Context windows are a scam.

OpenAI and Anthropic sell you a lie. They promise infinite memory. They promise seamless workflows. But the second you start a new chat, your AI forgets everything. You paste the same rules, the same briefs, and the same context, over and over again.

It is exhausting.

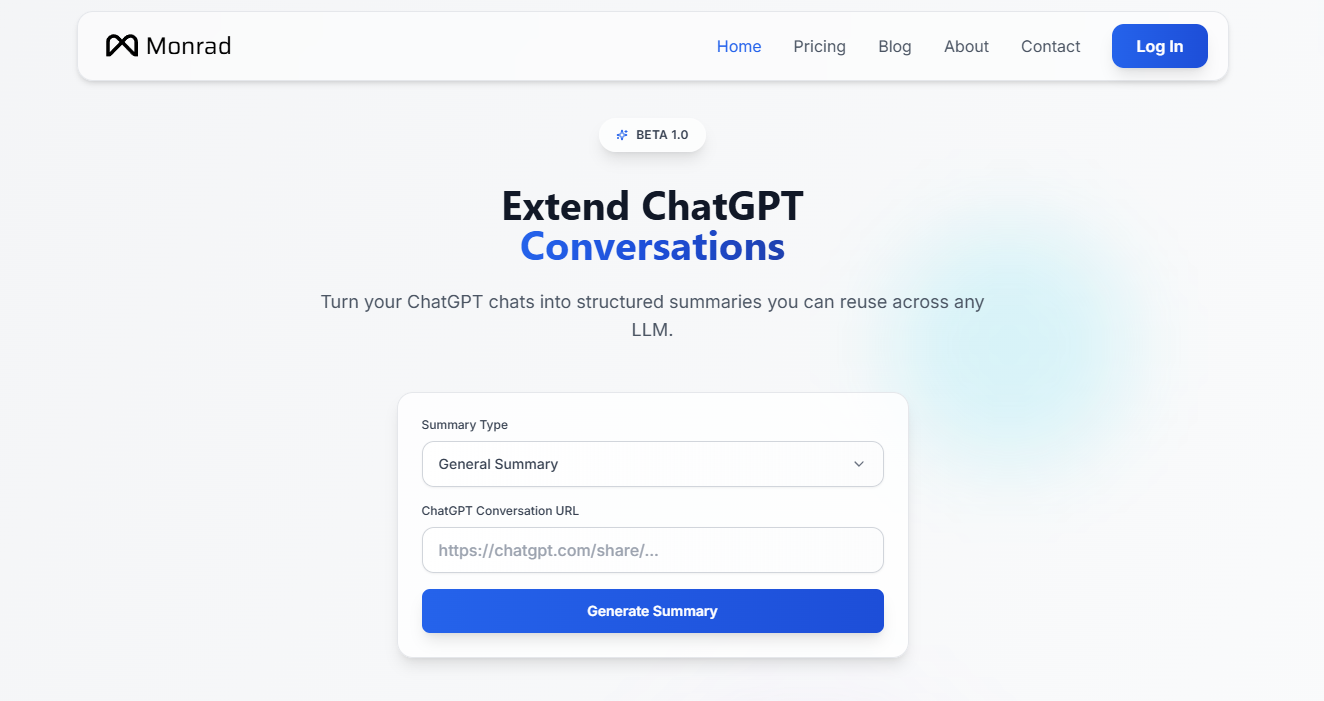

Here is the deal: Monrad fixes this. It builds a persistent memory layer for your AI sessions.

You take a link from a massive, sprawling ChatGPT conversation. You drop it into Monrad. The tool rips out the fluff and generates a structured summary. A clean, reusable artifact.

Why does this matter?

Because starting from scratch costs time. Time is money.

Stop Pasting The Same Prompts

Most people treat AI like a goldfish. They feed it a little bit of information, get an answer, and wipe the bowl. They spend ten minutes every morning reminding the machine how they write.

Smart operators build assets.

Monrad lets you convert chats into specific formats. You build character profiles, project briefs, or complex study guides. You capture the exact nuances of a 50-prompt deep dive. You export that hard-earned knowledge. Then, you inject it into Claude, Gemini, or a fresh ChatGPT window.

The key context stays intact. The AI knows what you want from the first message. It picks up where the last model left off.

How The Memory Layer Works

You do not need to code. You do not need to install complex plugins or manage API keys.

You grab a shared ChatGPT link. You select the summary type. Monrad does the heavy lifting.

It reads the entire thread. It isolates the core decisions, established facts, and specific styles. It spits out a dense, formatted text block. You take that block and paste it anywhere.

But there is a catch.

You have to actually build good context first. Monrad cannot save a garbage conversation. If your chat is weak, your summary is weak. Feed it high-value interactions. Push the AI to establish firm rules. Then, let Monrad distill those rules into a portable format.

The Multi-LLM Reality

We live in a multi-model world.

Loyalty to one AI company is foolish. Sometimes ChatGPT writes better Python scripts. Sometimes Claude writes better sales copy. You need to move context between these tools fast.

Monrad acts as the bridge. You extract the brain from one model and transplant it into another. No friction. No re-explaining.

Your AI workflows become modular. You build a library of past successes. You store project histories, coding environments, and brand voices. You deploy them when needed.

Own Your Context

OpenAI wants to lock you in. They build their own memory features to keep you on their platform.

You must reject this.

When you control your context, you control your workflow. Monrad gives you portability. You decide where your data goes. You decide which model gets to process it.

This is how you scale output. You stop repeating yourself. You stop fighting the blank prompt. You start commanding the machine with precision. Get your context out of the chat log. Turn it into a tool.